If you have anything to do with a university, you are probably above such childish things as university rankings. Just to explain to my esteemed readers what other less refined people get up to: university rankings attempt to assess universities according to the quality of their research and, if desperate, by the quality of their teaching. Research is assessed by publications in quality journals (quality being assessed by impact factors, which is a separate issue) and research grants obtained, or number of Nobel Prizes, or similar shibboleths of scholarship. Teaching is assessed by student surveys and, to scrape the barrel, by mentioning the quality of the student experience: whether they have ever met a member of staff, had an essay marked or been taught anything; silly stuff like that.

All this is good fun, and to save you looking it up, University College London generally does well on international rankings.

QS World University Rankings 2016-17

- Massachusetts Institute of Technology (MIT)

- Stanford University

- Harvard University

- University of Cambridge

- California Institute of Technology (Caltech)

- University of Oxford

- University College London

- ETH Zurich

- Imperial College London

- University of Chicago

So what? The first three are in the US, which is very wealthy and can attract global talent and fund research. There is some first mover advantage, so Oxford and Cambridge do well, by attracting talent and legacies over 8 centuries. UCL and Imperial are in reasonably wealthy England. 9 of the top 10 were created by the English and their descendants. Not a bad legacy for one damp island.

However, whatever happened to Salamanca, Bologna, Padua, Naples, Siena, Valladolid, Macerata, Coimbra, and other first mover European centres of ancient learning, who should be well ahead of the pack? Well, they weren’t English, for a start. They fell by the wayside for the lack of something: Italy lost its early start, and the common factor for all of them may have been the lack of an industrial revolution, or perhaps a prevention of curiosity under Catholicism, or fundamental deep disorders in the Mediterranean European psyche: too much time on the beach, lack of proper governments, or even the lack of the civilizing impact of sharp winters and Protestant bloody-minded rejection of authority, which engender the required Northern cunning and dedication to truth as an absolute value. Something was missing among the continentals.

The OECD have entered the fray, by asking what is for them a seditious question: how do university rankings relate to student quality? The BBC has ventured to summarise their answer: Not all that much.

http://www.bbc.co.uk/news/business-37649892

Anyway, here is the BBC’s list of highest performing graduates, drawn from OECD data. Surreptitiously I have added another figure to the countries, which neither the OECD nor the BBC mentioned.

The OECD's top 10 highest performing graduates

- Japan 105

- Finland 97

- Netherlands 100

- Australia 100

- Norway 100

- Belgium 99

- New Zealand 100

- England 100

- United States 98

- Czech Republic 98

Japan is out ahead; all the rest are Greenwich Mean Intelligence (100), with little variation. China is not on the list, but would rank close to Japan.

Student ability is a dangerous concept because the OECD does not mention intelligence. If you put “OECD” into the blog search bar you will get all the mentions I have made of their work, but here is a starter below, with two others to give you a flavour of the way the OECD argues:

https://drjamesthompson.blogspot.co.uk/2013/10/how-illiterate-is-oecd.html

https://drjamesthompson.blogspot.co.uk/2013/12/oecd-children-become-oecd-adults.html

https://drjamesthompson.blogspot.co.uk/2014/01/pisa-goes-to-us-finds-little-bang-for.html

The BBC article, though interesting, raises an easily formulated explanation: the best universities now reflect a blend of early starters and mostly the English who kept the flame of the Enlightenment alive.

One can have very bright students, but still live in a country where universities have yet to generate sufficient research to draw in students from other countries. Eventually the new universities will reflect the global hinterland of talent. On intelligence alone then the cities of China will lead the way, so long as they are curious, disputative, and not led by any political party or overweening government.

Meantime, do universities add value? The BBC references a gargantuan OECD publication “Education at a glance 2016” which should bludgeon the average reader into submission. Just reading the contents list will make your head spin. This is what a large budget can bring you. Be warned: they have an explicit agenda which they list on page 15, and although these are noble aims, they include eliminating gender disparities and inculcating sustainable development and cultural diversity. All countries get graded on their progress to the correct curricula. Designing a Maths course to gain their approval might be tricky, particularly if the highest achievers are always Asian men. Perhaps education would be better with less cultural diversity: just imitate the Chinese.

The Report complains: More women than men are now tertiary graduates. But women are still less likely to enter and graduate from more advanced levels of tertiary education, such as doctoral or equivalent programmes. Women remain under-represented in certain fields of study, such as science and engineering, and over-represented in others, such as education and health. In 2014 there were, on average, three times more men than women who graduated with a degree in engineering and four times more women than men who graduated with a degree in the field of education. Graduates in engineering earn about 10% more than other tertiary-educated adults, on average, while graduates from teacher training and education science earn about 15% less.

Don’t dare to suggest that women may have different interests to men, and also different cognitive strengths and weaknesses.

Here are their summary comments on immigration:

Immigrants are less likely to participate at all levels of education. Immigrant students who reported that they had attended pre-primary education programmes score 49 points higher on the OECD Programme for International Student Assessment (PISA) reading test than immigrant students who reported that they had not participated in such programmes. This difference corresponds to roughly one year of education. In most countries, however, participation in pre-primary programmes among immigrant students is considerably lower than it is among students without an immigrant background. In many countries immigrants lag behind their native-born peers in educational attainment. For example, the share of adults who have not completed upper secondary education is larger among those with an immigrant background.

The clear implication is that immigrants should be encouraged to get educated, and then they will be as able as the locals to contribute to their country’s economies. However, if brighter immigrant parents make sure their children start learning early, some of the 49 points apparently gained may be due to selection.

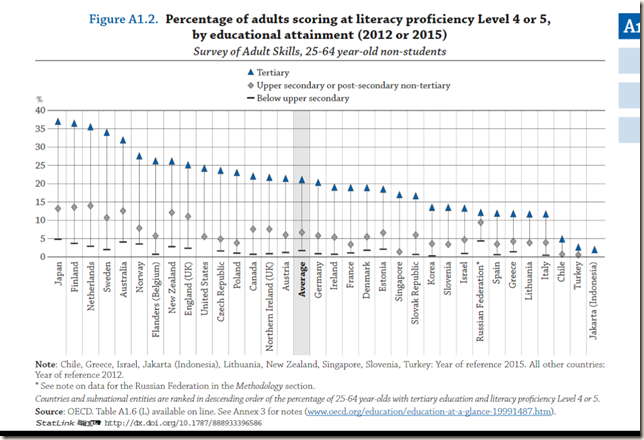

After those preliminaries, now let us turn to the issue highlighted by the BBC article in which the OECD's top 10 highest performing graduates are listed. I think (but cannot be sure) that it is taken from the Table A1.2 shown below:

For some reason the BBC list drops Sweden. To my eye the Premier League of top students as regards “literacy” are to be found in Japan, Finland, Netherlands, Sweden and Australia. Norway to Slovakia are in the First Division, Korea to Italy in the Second Division, and Chile, Turkey and Indonesia lead the vast Third World.

What does “literacy” mean in this context? It means comprehension and the ability to handle concepts, something requiring verbal intelligence, a taboo concept for the OECD.

At level 4, tasks that involve retrieving information require the reader to locate and organize several pieces of embedded information. Some tasks at this level require interpreting the meaning of nuances of language in a section of text by taking into account the text as a whole. Other interpretative tasks require understanding and applying categories in an unfamiliar context. Reflective tasks at this level require readers to use formal or public knowledge to hypothesize about or critically evaluate a text. Readers must demonstrate an accurate understanding of long or complex texts whose content or form may be unfamiliar.

To really answer the question as to whether universities add value, here are some research possibilities. First, one could compare the national literacy measures against the rankings for all the national universities. This would be rough and ready, but informative. Second, and far better, one would look at the broad range of adult skills, and adult earnings, for students of high ability according to whether or not they went to university.

Here is the way I summarised whether secondary schools add value to primary schools when I discussed it in December 2013. The same method should be applied to evaluating whether universities add value.

So, we know that the education systems of many countries ten years ago were not turning out uniformly capable citizens, and to the extent that today’s student results are roughly in line with the previous decade’s results, they will not be turning out uniformly capable citizens now. This is because there is a bell curve of ability and because social and educational systems vary in their effectiveness. Discriminating the relative contributions of these two factors is well nigh impossible unless you take measures of cognitive ability, preferably pre-school, but certainly early in life and including ability at 11 years of age, and then you test their attainments at age 15/16. Basically, if you know what children can do at the end of primary school you are in a good position to see what benefit they get from secondary education. Without those facts, interpretation of educational interventions will be prone to considerable error.

At the moment the snapshot of education in the OECD 2016 report does not answer the question, though it is worth investigating. Enough of this. Go back to marking essays.

Also worth bearing in mind that the quality of graduates will also be influenced by the percentage of the population going into tertiary education (and the efficiency with which this process takes place).

Japan is also third (after Canada and Russia) in terms of the tertiary enrollment rate, so its being at the top of the literacy proficiency scale nonetheless is doubly impressive.

Agree. I did not go into that since you can get an apparently high tertiary enrollment by relaxing the qualification required by both students and university courses. Debased degrees not much use: apprenticeships very probably much better.

One of the striking features of the list is the absence of the country - or perhaps I should say culture-area - which had, for a century and more, what was universally recognised as the best university system in the world.

Yes. Perhaps it was related to British Universities suddenly doing better in the 1930s.

http://www.sciencealert.com/no-the-universe-is-not-expanding-at-an-accelerated-rate-say-physicists

Nobel prizes? Oops.

A Nobel prize is like a jury verdict on a cancer diagnosis. Thank God that a patient's life does not depend on people's votes but on the specific diagnosis of qualified physicians.

Re: how do university rankings relate to student quality? ... Not all that much.

Why? http://www.chrisclaassen.com/University_rankings.pdf

"three of the six international ranking systems show bias toward the universities in their home country."

Different university ranking systems target different audiences. The QSRank appears to be geared towards students, with a heavy dose of subjective university reputation among academics and employers (50% weight). The Times Higher Education ranking also has parameters which might interest education policy makers.

Subjective reputation scores can sometimes change quickly, tracking the rate of change rather than the absolute values of other, objective measures. For example, according to NatureIndex, the scientific journal output from Harvard, though still top of the list, has been dropping over the years. From the trends it is estimated that Stanford will overtake Harvard in 4.16 years as the top science university, but in QSRank Stanford is already ahead of Harvard. Again from NatureIndex trends, Oxford is estimated to overtake Harvard in 6.32 years, but THERank has already placed Oxford at the top.

dux.ie

Agree that any ranking system will have its own peculiarities, but the general pattern is reasonably consistent, and the same institutions almost always figure in the top ranks, though the positions alter. Giving the scores would be better, but people seem to love rankings. The main wild card is student experience measures, which have in my view been put in as consolation prize for universities which do not do research.

Page 48 of the OECD report contains an interesting chart...

"student experience measures, which have in my view been put in as consolation prize for universities which do not do research." And yet Joe Bloggs pays his taxes, and pays his children's university fees, under the belief that teaching is the central business of a university. The academics, meanwhile, believe that the central purpose of a university is to pay them to pursue their hobbies.

Eventually this contrast will lead to a Dissolution of the Monasteries.

Re: Female in STEM universities

THE has provided university gender data, and combined with data from NatureIndex, the training of women in scientific areas can be investigated. From the list of the 25 universities with the highest percentage of female students, only 4 were above the cutoff to be listed in NI. Of interest is Soochow University, which has the highest % of female students (78%) and is ranked at 78 (WFC=108.47) in NatureIndex's top 500 science universities.

Overall China has male dominated universities. Historically Soochow University started off as a teacher college and in 2012 its score was below the cutoff to be listed. However, it is located in the Jiangnan/Gangnam area with a long history of producing intellectual elites, and it is also in competition with the nearby Nanjing University. The government directive 211 managed to propel its performance upward without changing the gender ratio much.

https://en.wikipedia.org/wiki/Soochow_University_%28Suzhou%29

In three years it has leapfrogged ahead of many well known universities like Boston Uni (WFC=96.94, %F=58%), Uni of Utah (WFC=92.46, %F=45%), Indiana University (WFC=92.36, %F=53%), etc. Though the demographics of the faculty are not known, professors do the grant work and the graduate students do the grunt work. A more up-to-date breakdown of the WFC scores is: life science 6.01, chemistry 78.87 and physical sciences 35.50.

http://www.natureindex.com/institution-outputs/china/soochow-university/513906bc34d6b65e6a0002e9

List of high performing science universities (WFC greater than 100) with a high female gender ratio (greater than 60%), compared to some other universities, where D15_12 = WFC15 - WFC12:

| GPI | FPct | WFC15 | D15_12 | NIRank | THERank | Inst |
|---|---|---|---|---|---|---|
| 3.55 | 78 | 108.47 | 52.43 | 78 | 501-600 | Soochow University, China |
| 2.03 | 67 | 125.32 | 12.69 | 61 | 120 | University of Copenhagen, Denmark |
| 1.56 | 61 | 105.56 | -8.88 | 82 | =137 | University of Geneva, Switzerland |
| ... | | | | | | |
| 1.27 | 56 | 214.83 | 20.27 | 30 | 15 | University College London, United Kingdom |
| 0.85 | 46 | 253.62 | 84.49 | 20 | 201-250 | Nanjing University, China |
| 1.13 | 53 | 300.39 | 88.32 | 11 | 29 | Peking University, China |
| 0.85 | 46 | 390.54 | -37.12 | 6 | 4 | University of Cambridge, United Kingdom |
| 0.85 | 46 | 398.38 | 52.25 | 5 | 1 | University of Oxford, United Kingdom |
| 0.72 | 42 | 530.83 | 48.84 | 2 | 3 | Stanford University, United States |
| 0.89 | 47 | 772.33 | -125.36 | 1 | 6 | Harvard University, United States |
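
The overtaking estimates quoted earlier in this thread (Stanford passing Harvard in 4.16 years, Oxford in 6.32 years) look consistent with a plain linear extrapolation of the WFC15 and D15_12 figures above. The sketch below is only a guess at that arithmetic, not the commenter's confirmed method. It assumes that D15_12 covers three years (2012 to 2015) and that each university's yearly change stays constant. The function name `years_to_overtake` is made up for illustration.

```python
def years_to_overtake(leader_wfc, leader_delta, chaser_wfc, chaser_delta, span_years=3.0):
    """Years until the chaser's WFC matches the leader's, under a linear trend.

    Assumption (not stated in the thread): each *_delta is the change in WFC
    over span_years, and that rate of change simply continues.
    """
    gap = leader_wfc - chaser_wfc
    # Rate at which the gap shrinks each year.
    closing_per_year = (chaser_delta - leader_delta) / span_years
    if closing_per_year <= 0:
        return None  # the chaser is not catching up on this trend
    return gap / closing_per_year


# (WFC15, D15_12) pairs copied from the table above.
harvard = (772.33, -125.36)
stanford = (530.83, 48.84)
oxford = (398.38, 52.25)

stanford_years = years_to_overtake(*harvard, *stanford)
oxford_years = years_to_overtake(*harvard, *oxford)

print(f"Stanford overtakes Harvard in about {stanford_years:.2f} years")
print(f"Oxford overtakes Harvard in about {oxford_years:.2f} years")
```

On these assumptions the two results round to 4.16 and 6.32 years, matching the figures quoted. A linear trend over three data years is a fragile basis for forecasting, so the numbers are best read as an illustration of direction rather than a prediction.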

dux.ie

Thank you very much. Would you like to work this up into an essay I could post up?
